A major lawsuit in California is raising AI privacy alarms right here in San Diego and Orange County. It claims local healthcare providers used AI to record doctor-patient discussions. And they didn’t ask permission first. This case addresses an AI privacy issue that affects our community.

Table of contents

- What’s Going on with Sutter Health and Memorial Care?

- Why Use Tools Like Abridge Medical Conversation AI?

- AI Privacy: What About Patient Privacy?

- What Laws Are We Talking About?

- How AI Helps Doctors with Billing and Documentation

- This Is Part of a Bigger AI Privacy Issue

- So, What’s the Balance, What Should Patients Do?

- What’s Next?

- Bottom Line

- Read the Full Complaint Below

- Common AI Privacy Questions

- Case Info

- Further Reading on AI Privacy

What’s Going on with Sutter Health and Memorial Care?

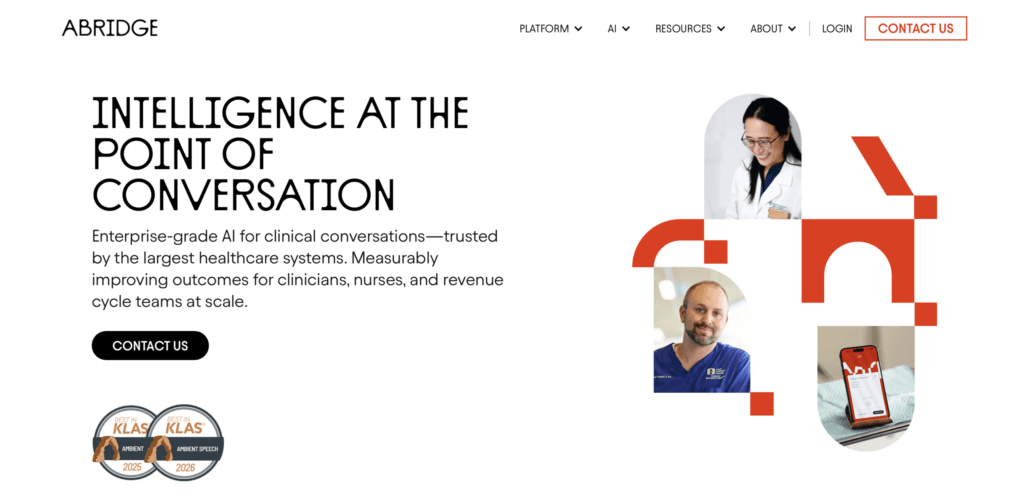

Sutter Health and Memorial Care, both serving patients in San Diego and Orange County, are at the center of this case. They used an AI tool called Abridge AI. It listens to doctor visits, records everything, and then sends it to outside servers. The AI turns the audio into notes for medical records.

Sounds helpful, right? But here’s the problem: patients say they never knew this was happening. No one asked if it was okay. Their private health conversations were recorded without their say-so.

Did You Know

Many healthcare facilities in San Diego and Orange County use Abridge AI.

Why Use Tools Like Abridge Medical Conversation AI?

Here’s how AI helps local doctors:

1. Reduces paperwork by automatically writing notes

2. Gives doctors more time with patients and less time typing

3. Improves the accuracy of medical records

4. Pulls important info from electronic health records and patient data

5. Helps doctors make better decisions

6. Reduces mistakes caused by rushed or incomplete note-taking

7. Catches details that might otherwise get missed

AI Privacy: What About Patient Privacy?

Here’s the catch. When AI records private conversations, more people and machines gain access to sensitive info. That’s a big AI privacy issue. Patients in San Diego and Orange County expect their medical conversations to stay private. Secret recordings feel like a betrayal of trust.

What Laws Are We Talking About?

| Law | What it requires |
| --- | --- |
| California Invasion of Privacy Act (CIPA) | Everyone in the conversation must agree before recording. |
| Confidentiality of Medical Information Act (CMIA) | Medical info can’t be shared without okay from the patient. |
| Federal Wiretap Act (ECPA) | No recording or sharing of private discussions without the patient’s knowledge. |

The lawsuit claims the AI recordings broke all these rules.

How AI Helps Doctors with Billing and Documentation

Privacy concerns matter, but AI tools like Abridge AI also help doctors in important ways. For example, they help ensure that medical notes meet insurance billing requirements. As one Abridge AI spokesperson explained:

“We figured out that this patient has this insurance plan in this geography, this part of the country, and thus your note needs to look like this.”

This means the AI understands the specific billing rules for each patient’s insurance and location. It helps doctors format their notes correctly, which reduces errors and makes sure doctors are paid properly for their care.

Doctors document not only for medical accuracy but also to support billing and revenue cycles. AI helps by taking some of this work off their shoulders, allowing them to spend more time with patients.

This Is Part of a Bigger AI Privacy Issue

This fight isn’t just about local hospitals. It’s about how AI is changing privacy everywhere.

Take Anthropic, an AI company. They’re fighting the U.S. government over AI surveillance. The government wants to use AI to watch people. Anthropic says, “No way.” This shows how AI can threaten privacy on a big scale.

So, What’s the Balance, What Should Patients Do?

AI can help doctors and patients a lot. But it can also put privacy at risk. Ask questions! If you’re at the doctor’s and AI might be recording, ask if you can opt out. You have a right to choose.

What’s Next?

Hospitals in San Diego and Orange County need to be clear about when AI is recording. Patients should get a choice to say yes or no. And data must be locked down tight.

This lawsuit shows we need better rules for AI in healthcare, especially in San Diego and Orange County. Clear consent, strong security, and respect for privacy are a must.

Local lawmakers and healthcare leaders are paying attention. They know AI is growing fast in medicine. But laws need to catch up.

Abridge AI has not filed an answer to the complaint.

Bottom Line

AI is changing medicine fast. It can make care better, but it can also put your private info at risk.

This case makes us think: How do we use AI without giving up privacy? The answer matters for all of us here in San Diego and Orange County.

Read the Full Complaint Below

Common AI Privacy Questions

Q: How can I tell if my doctor is using AI to record my visits?

A: Ask your doctor or the clinic staff directly. They should tell you if AI tools are in use. Look for signs or notices in waiting rooms or check patient forms for consent information.

Q: What should I do if I want to refuse AI recording during my appointment?

A: You can say no. Tell your doctor or nurse you don’t want your conversation recorded by AI. Clinics should have an opt-out process. If they don’t, ask for it.

Q: How is my recorded health information stored, and who can access it?

A: Hospitals should keep recordings secure and limit access to authorized staff only. Ask your provider how they protect your data and who can listen to or use your recordings.

Q: If my privacy was violated, what actions can I take, or who can I contact?

A: You can file a complaint with the California Department of Public Health or with the Office for Civil Rights at the U.S. Department of Health and Human Services. You may also want to consult a lawyer, especially if you think laws like CIPA or CMIA were broken.

Q: Are all local clinics and hospitals using this AI tool, or just some?

A: Not all clinics use AI recording tools. The lawsuit focuses on Sutter Health and Memorial Care, but other providers may or may not use similar tech. Always ask your provider about their policies.

Case Info

Case Name: Washington et al. v. Sutter Health et al.

Case Number: 4:26-cv-03012-KAW

Court: U.S. District Court, Northern District of California